Welcome to Smartproxy Blog!

Build knowledge on everything proxies, or pick up some dope ideas for your next project – this is just the right place for that.

In 2006, British mathematician Clive Humby coined the phrase "data is the new oil." He pointed out t...

Dominykas Niaura

Jul 03, 2024

10 min. read

Ethical Web Data Collection Initiative (EWDCI) Publishes a Q&A with Smartproxy CEO Vytautas Savickas

Ethical Web Data Collection Initiative (EWDCI), an international consortium of web data aggregation ...

Dominykas Niaura

Jun 19, 2024

2 min. read

Online marketplaces are beloved for offering a wide array of goods, often from things we don’t need ...

Dominykas Niaura

Jun 19, 2024

10 min. read

Excel is an incredibly powerful data management and analysis tool. But did you know that it can also...

Zilvinas Tamulis

May 27, 2024

7 min. read

Imagine you're on a thrilling mission in the digital world and need to access restricted areas, gath...

Mariam Nakani

May 25, 2024

5 min. read

Imagine this scenario: you’re bored at work with nothing to do. You decide to check out Reddit for a...

Zilvinas Tamulis

Apr 26, 2024

8 min. read

In web development, skillfully handling HTTP requests is key for interacting with APIs, testing end...

Martin Ganchev

Apr 24, 2024

15 min. read

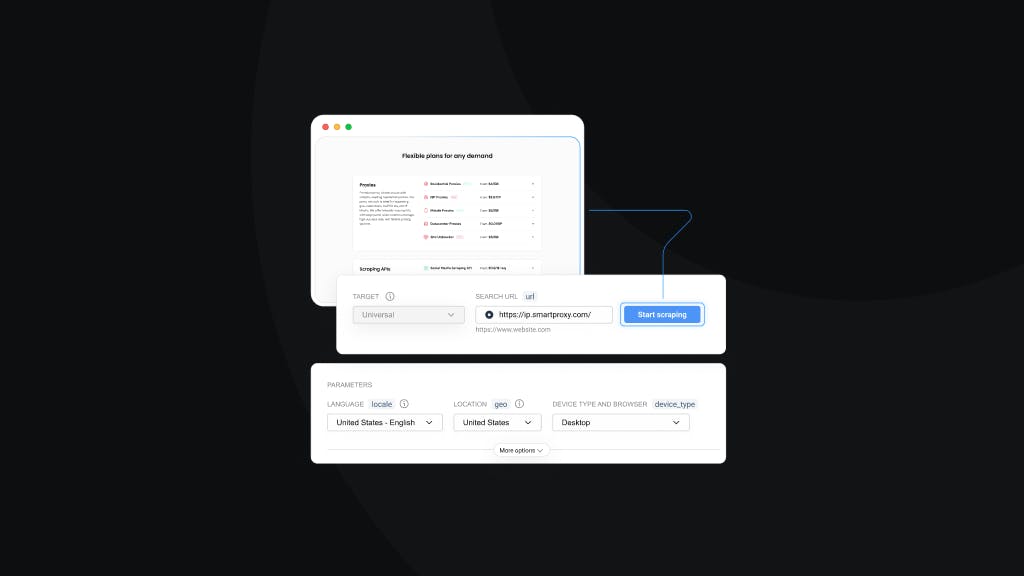

In today’s digital era, businesses can access relevant public data to reach their goals. But here’s ...

Vilius Sakutis

Apr 19, 2024

3 min. read

Let’s be honest, a headless browser sounds, to say the least, peculiar if you haven’t heard the term...

Mariam Nakani

Apr 03, 2024

5 min. read